Something shifted in software development between 2024 and 2026 that doesn’t show up in the adoption statistics. The headline number — 84% of developers now use or plan to use AI tools, up from 44% in 2023 — makes this look like a steady linear climb. It isn’t. The nature of what developers are doing with AI changed fundamentally: from accepting autocomplete suggestions to defining goals and letting agents execute them across entire codebases. That’s not a quantitative shift. It’s architectural.

From Autocomplete to Agent: The Spectrum That Matters

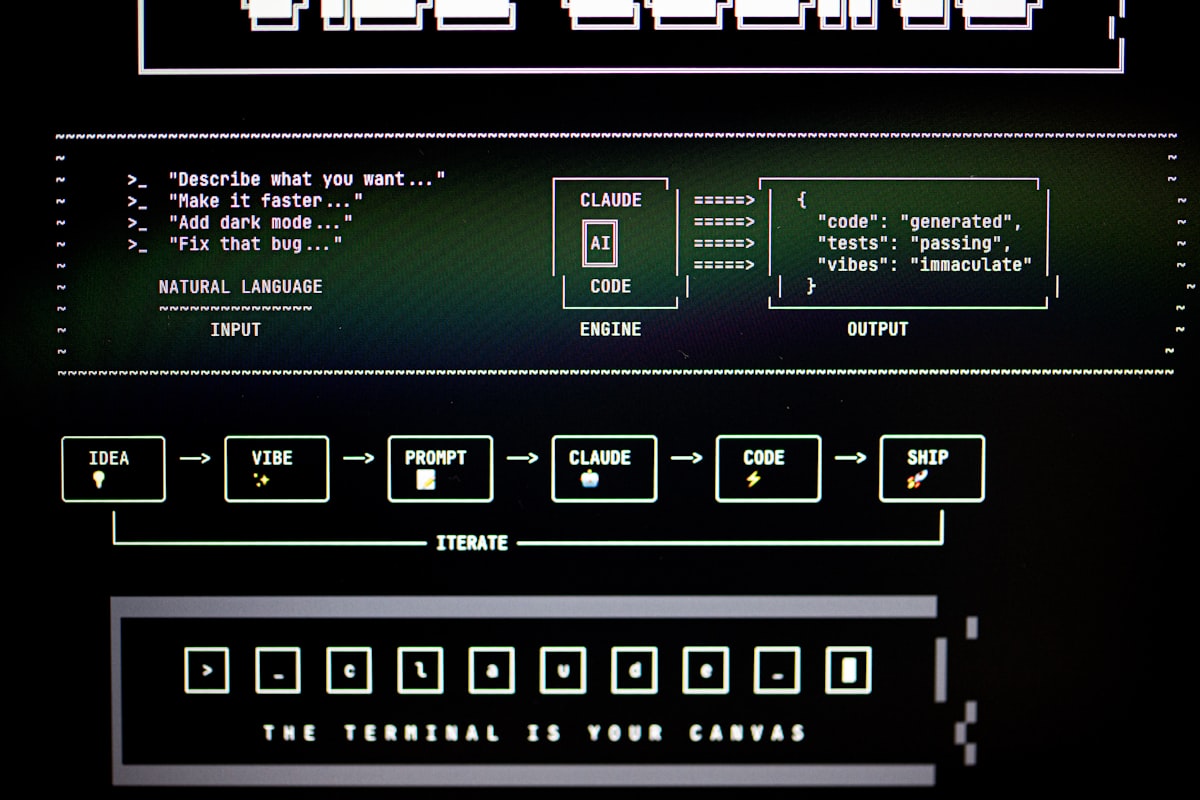

There are four distinct levels of AI agency in coding tools, and most conversations about “AI in development” conflate all of them. Understanding where a tool sits on this spectrum determines what kind of developer behavior it demands:

- Autocomplete — inline suggestions as you type. Requires constant micro-decisions but no significant workflow change.

- Code generation — natural language to code in a chat interface. Developer maintains full control; AI is a fast lookup and draft engine.

- Agentic task execution — tools like GitHub Copilot Workspace and Cursor’s agent mode. Developer describes a goal; AI plans and implements across multiple files, runs tests, and iterates. Developer reviews and approves rather than writes.

- Autonomous execution — agents like Devin, Claude Code, and Amazon Q Developer handling end-to-end tasks: navigating the codebase, executing commands, managing dependencies, and validating outputs. Developer defines scope and evaluates results.
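The plan, implement, test, and iterate cycle described for agentic and autonomous execution can be sketched as a bounded loop. This is a minimal illustration under stated assumptions, not how Copilot, Cursor, Devin, or Claude Code work internally. `propose_change` stands in for a hypothetical model call, `run_tests` for whatever test runner a project uses, and `max_attempts` is an arbitrary cap. The loop hands its result back for human review rather than merging anything.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentRun:
    """Record of one hypothetical agentic task execution, kept for human review."""
    goal: str
    attempts: int = 0
    passed: bool = False
    log: list[str] = field(default_factory=list)


def run_agent_task(
    goal: str,
    propose_change: Callable[[str, list[str]], str],
    run_tests: Callable[[str], list[str]],
    max_attempts: int = 3,
) -> AgentRun:
    """Propose a change, run the tests, feed failures back, and stop after max_attempts."""
    run = AgentRun(goal=goal)
    failures: list[str] = []
    while run.attempts < max_attempts:
        run.attempts += 1
        change = propose_change(goal, failures)  # hypothetical model call, sees last failures
        failures = run_tests(change)             # empty list means every test passed
        run.log.append(f"attempt {run.attempts}: {len(failures)} failing test(s)")
        if not failures:
            run.passed = True
            break
    return run  # a human still reviews and approves; nothing is merged here


# Hypothetical stubs for illustration: each retry "fixes" one previously failing test.
def fake_propose(goal: str, failures: list[str]) -> str:
    return str(len(failures) - 1) if failures else "2"


def fake_tests(change: str) -> list[str]:
    return [f"test_{i}" for i in range(int(change))]


result = run_agent_task("fix flaky date parsing", fake_propose, fake_tests)
print(result.passed, result.attempts)  # True 3
```

Capping attempts is a deliberate choice in this sketch: if the test feedback is weak or misleading, an unbounded loop could keep producing changes without ever converging.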

Most engineering organizations in 2026 operate at levels 2–3 (code generation and agentic task execution). The tools for level 4, autonomous execution, exist and are production-capable for specific task types, but the workflows, review processes, and governance models needed to use them safely at scale are still being worked out.

The Numbers Behind the Shift

GitHub’s Octoverse 2024 report documented that AI-assisted pull requests increased 248% year over year, the highest single-year acceleration in the report’s history. GitHub reported that Copilot crossed 1.8 million paid subscribers in 2025, and GitHub’s own research has found developers completing tasks 55% faster on average, with 88% saying it helps them stay in a flow state. Cursor, the AI-native fork of VS Code, reached $100M ARR in early 2024, reportedly the fastest B2B SaaS product to hit that milestone, with users at companies including OpenAI, Stripe, and Perplexity.

On the autonomous end, SWE-bench, the benchmark that measures an AI’s ability to resolve real GitHub issues autonomously, tells a useful story. When Devin launched in 2024, it was the first agent to exceed 10%. By 2026, top models score above 50% on SWE-bench Verified, roughly a fivefold improvement in two years, although the two figures come from different versions of the benchmark: SWE-bench Verified is a human-validated subset released after Devin’s launch. The catch, and it matters for production decisions, is that SWE-bench tasks are isolated, clean, and well-documented. Real codebases aren’t.
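The “5x” figure is a ratio of resolve rates. Using the rounded thresholds from the text (both are lower bounds, so the ratio is approximate), the relative and absolute changes work out as follows:

```latex
\frac{50\%}{10\%} = 5 \quad (\text{relative gain}),
\qquad
50\% - 10\% = 40 \text{ percentage points} \quad (\text{absolute gain})
```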

The Orchestrator Shift

In February 2025, Andrej Karpathy coined the term “vibe coding” to describe a mode of development in which engineers fully give in to the AI and stop reading the code it generates. He framed it as a playful experiment for throwaway projects. But the concept crystallized something real: the direction of travel is toward developers as orchestrators (defining intent, evaluating outputs, managing agent workflows) rather than as line-by-line implementers.

JetBrains’ Developer Ecosystem Report found that 71% of developers believe AI will fundamentally change how software is built within three years, and 39% already describe their workflow as “AI-first.” The scarcest resource in software development is shifting from implementation time to architectural judgment. Some tasks that once took 2–3 days of focused engineering effort can now be completed in hours. The ceiling on individual output has risen, which means the bottleneck is no longer writing code. It’s deciding what to build, and whether what the agent built is actually correct.

The Productivity Paradox (and What It Reveals)

Here’s the finding that engineering leaders need to internalize: AI tools substantially improve individual developer throughput, but team-level delivery velocity improves less than the individual numbers suggest. DORA’s 2024 Accelerate State of DevOps report points to the underlying mechanism: bottlenecks shift from writing code to reviewing AI-generated code, managing integration risk, and keeping architectural decisions coherent across AI-assisted changes.

Research suggests that AI-generated code can require more review time per line than human-written code, because of subtle semantic errors that pass linting and type-checking but violate architectural intent. The net implication: AI tools deliver the most value when teams redesign their review processes, test coverage, and deployment pipelines together with their coding tools, rather than adopting the tools in place of that work.
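The bottleneck argument can be made concrete with a toy two-stage pipeline in which every change is authored and then reviewed, so the slower stage caps delivery. All numbers here are hypothetical, and the model simplifies by treating authors and reviewers as separate groups; it illustrates the shape of the effect, not DORA’s measurements.

```python
def team_throughput(
    authoring_hours: float,
    review_hours: float,
    authors: int,
    reviewers: int,
    hours_per_week: float = 40.0,
) -> float:
    """Changes shipped per week when each change is authored, then reviewed.

    Like any serial pipeline, the slower stage sets the pace.
    """
    authoring_capacity = authors * hours_per_week / authoring_hours
    review_capacity = reviewers * hours_per_week / review_hours
    return min(authoring_capacity, review_capacity)


# Hypothetical team: 6 authors, 2 reviewers.
before = team_throughput(authoring_hours=8, review_hours=2, authors=6, reviewers=2)
# AI halves authoring time per change but adds review time per change.
after = team_throughput(authoring_hours=4, review_hours=2.5, authors=6, reviewers=2)
print(before, after)  # 30.0 32.0
```

Authoring speed doubles, yet team throughput rises only about 7%, because review has become the binding constraint.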

This is also the diagnostic for which engineering organizations will capture the most value from agentic coding tools. Three factors predict success: comprehensive test coverage (so agents can validate their own changes autonomously), clear architectural documentation (so agents have the context they need to make correct decisions), and fast CI/CD loops (so agent-generated changes get rapid, honest feedback). Organizations missing these foundations will generate more code faster — without generating more working software faster.

The Enterprise Agentic Layer

For engineering organizations operating at scale, the tools worth tracking are the ones that bring agentic capability with enterprise-grade guardrails. Amazon Q Developer integrates with AWS services, JetBrains IDEs, and VS Code, offering security scanning, code transformation, and autonomous task completion tied to AWS infrastructure, with audit trails and permission scoping built in. Claude Code operates as a terminal-native coding agent that navigates codebases, executes commands, and manages files within developer-defined boundaries.
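Permission scoping and developer-defined boundaries can be illustrated with a minimal command gate that an agent harness might consult before running a shell command, logging every decision for audit. This is a hypothetical sketch: `ALLOWED_COMMANDS`, `BLOCKED_GIT_SUBCOMMANDS`, and `check_command` are invented names, and it does not reflect how Amazon Q Developer or Claude Code actually configure permissions. It assumes approved commands are executed as argument lists without a shell.

```python
import shlex
from datetime import datetime, timezone

# Hypothetical boundary definitions a developer might set for an agent.
ALLOWED_COMMANDS = {"git", "pytest", "ls", "cat"}
BLOCKED_GIT_SUBCOMMANDS = {"push", "reset", "rebase"}
# Reject anything that could chain or redirect commands if a shell were involved.
SHELL_METACHARACTERS = set(";&|<>`$")


def check_command(command: str, audit_log: list) -> bool:
    """Return True if the proposed command is inside the boundary; log every decision."""
    allowed = False
    if not SHELL_METACHARACTERS & set(command):
        try:
            tokens = shlex.split(command)
        except ValueError:  # e.g. unbalanced quotes
            tokens = []
        if tokens and tokens[0] in ALLOWED_COMMANDS:
            is_blocked_git = (
                tokens[0] == "git" and len(tokens) > 1 and tokens[1] in BLOCKED_GIT_SUBCOMMANDS
            )
            allowed = not is_blocked_git
    audit_log.append(
        {
            "time": datetime.now(timezone.utc).isoformat(),
            "command": command,
            "allowed": allowed,
        }
    )
    return allowed


log: list = []
for cmd in ["pytest -q", "git push origin main", "rm -rf build", "cat notes.txt && rm x"]:
    print(cmd, "->", check_command(cmd, log))
```

The check is deliberately simplistic: a real policy would also need to handle global flags such as `git -C <path> push`, which this sketch does not catch.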

The pattern across enterprise agentic tools is the same: the goal isn’t to remove developers from the loop, but to push the loop to a higher level of abstraction. Developers define constraints, review architectural decisions, and maintain ownership of quality — agents handle the mechanical work of implementation, verification, and iteration within those constraints.

What This Means for Engineering Teams in 2026

The organizations extracting the most value from agentic coding in 2026 aren’t the ones with the highest tool adoption rates. They’re the ones who’ve made deliberate decisions about which level of agency to operate at, for which types of tasks, with which oversight processes in place. That’s not a technology decision — it’s a software engineering maturity decision.

The practical implication: start with the workflow, not the tool. What tasks in your current engineering process are mechanical, well-defined, and have clear validation criteria? Those are the candidates for agentic execution. What tasks require architectural judgment, stakeholder context, or tribal knowledge that isn’t in your codebase? Those stay human-led — and become more important, not less, as agents handle more of the rest.
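The triage questions above can be written down as an explicit routing rule, which makes a team’s policy reviewable rather than implicit. A minimal sketch, assuming each task has already been tagged by a person; the `Task` fields and the example tasks are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    mechanical: bool           # repetitive work with little design latitude
    well_defined: bool         # scope and "done" criteria are written down
    has_validation: bool       # tests or checks can confirm the result
    needs_human_context: bool  # architectural judgment, stakeholder context, or tribal knowledge


def route(task: Task) -> str:
    """Send a task to an agent only when all three criteria hold and no human-only context is needed."""
    if task.needs_human_context:
        return "human-led"
    if task.mechanical and task.well_defined and task.has_validation:
        return "agent candidate"
    return "human-led"


tasks = [
    Task("migrate deprecated logging calls", True, True, True, False),
    Task("split the billing service", False, False, False, True),
    Task("tidy up the admin UI", True, False, False, False),
]
for t in tasks:
    print(f"{t.name}: {route(t)}")
```

Defaulting to human-led whenever a criterion is missing is a deliberate choice here: a task without clear validation criteria gives an agent no honest feedback signal.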

The shift isn’t from engineers to AI. It’s from engineers who write code to engineers who architect systems that include AI as a first-class component. That’s a meaningful distinction — and 2026 is the year most engineering organizations will have to decide which side of it they want to be on.